A developer has published a Python script on GitHub that “plays” 45 rounds of “Who Wants to Be a Millionaire” using an AI model.

Each round consists of 15 questions, and the winnings are then averaged across all rounds. The AI had no lifelines. 😉
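The averaging described above can be sketched roughly as follows. This is not the published script, only a minimal illustration under stated assumptions: the prize ladder is assumed to be the one from the German edition of the show, the player is assumed to keep the amount of the last correctly answered question, and the names `PRIZE_LADDER`, `winnings`, and `summarize` are hypothetical.

```python
from statistics import mean

# Assumed prize ladder in euros (German edition); the actual script may differ.
PRIZE_LADDER = [50, 100, 200, 300, 500, 1_000, 2_000, 4_000, 8_000,
                16_000, 32_000, 64_000, 125_000, 500_000, 1_000_000]


def winnings(correct_answers: int) -> int:
    """Prize for a round that ended after `correct_answers` correct answers.

    Assumption: the player keeps the amount of the last correct question
    (no fallback to safety levels).
    """
    if not 0 <= correct_answers <= len(PRIZE_LADDER):
        raise ValueError("correct_answers must be between 0 and 15")
    return 0 if correct_answers == 0 else PRIZE_LADDER[correct_answers - 1]


def summarize(rounds: list[int]) -> tuple[float, int]:
    """Return (average winnings, number of million wins) over all rounds."""
    prizes = [winnings(n) for n in rounds]
    millionaire_rounds = sum(p == PRIZE_LADDER[-1] for p in prizes)
    return mean(prizes), millionaire_rounds
```

Calling `summarize` with one entry per round (the number of questions the model answered correctly) yields the two figures reported for each model: the average winnings and the number of rounds that ended with the top prize.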

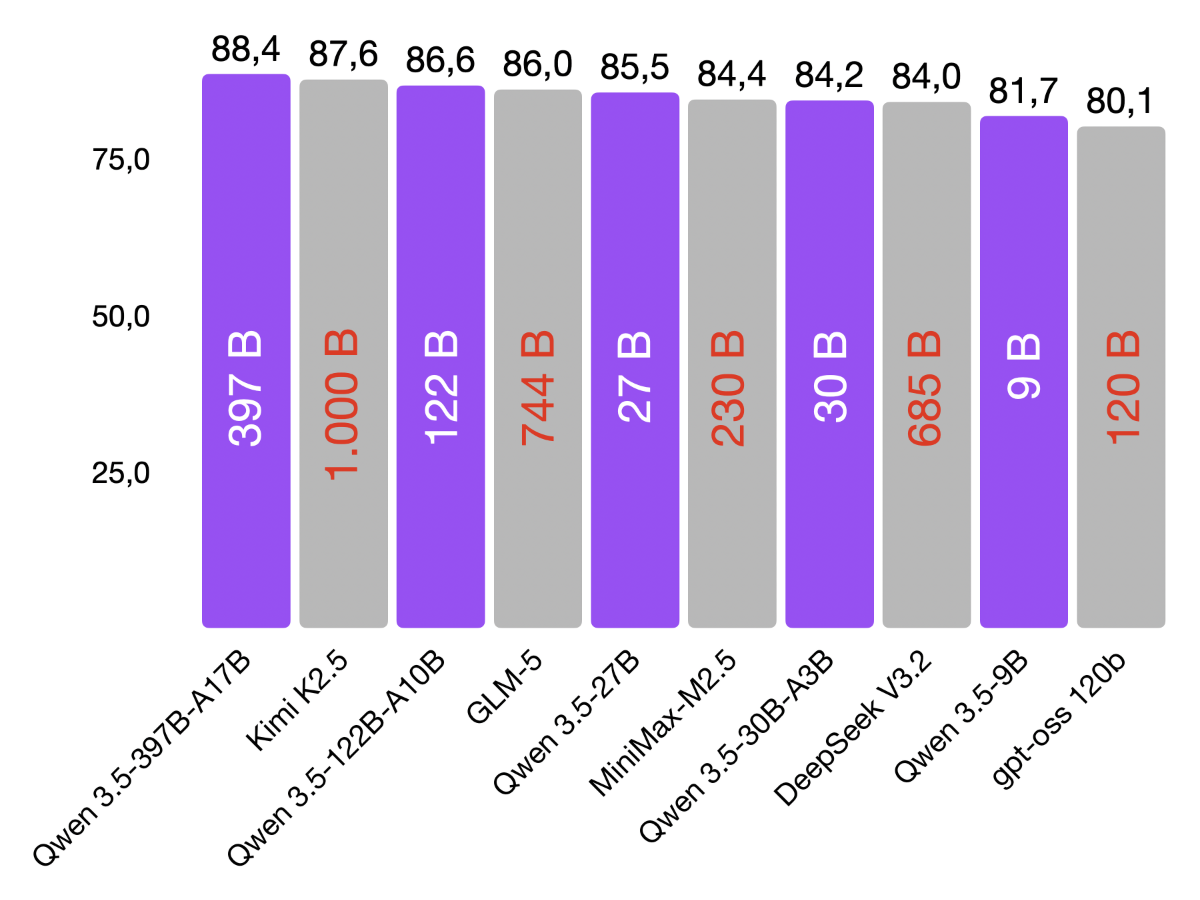

Among the “small” open-source models that run on my MacBook with LM Studio, OpenAI gpt-oss (20B), Mistral AI Small 3.2 (24B), and Qwen 3 (30B) came out on top.

gpt-oss would have become a millionaire in 3 of its 45 attempts and won an average of €80,177.

The picture is different for the large proprietary models. OpenAI GPT-5 would have become a millionaire 36 times, with average winnings of €813,783, and Google’s Gemini 2.5 Pro would have become a millionaire 33 times, with average winnings of €742,004.

| LLM | Size | Average winnings | Millionaire wins |
|---|---|---|---|
| OpenAI gpt-oss | 20B | €80,177 | 3 |
| Mistral Small 3.2 | 24B | €63,812 | 2 |
| Qwen 3 | 30B | €52,216 | 2 |
| Google Gemma 3 | 12B | €24,291 | 1 |
| Meta Llama 3.1 | 8B | €23,904 | 1 |
| Microsoft Phi 4 | 14B | €5,884 | 0 |
| Qwen 3 | 4B | €948 | 0 |
| IBM Granite 3.2 | 8B | €620 | 0 |
| Google Gemma 3 | 4B | €156 | 0 |
| Meta Llama 3.2 | 3B | €125 | 0 |

I find the figures entertaining, and they are relevant for private use, where AI serves as an answer machine for everyday questions. In a professional context, I am interested in other characteristics of AI models:

- How well does the model understand the context I provide?

- Does the model select the right tool for the task and pass it the correct parameters?

- How well does it follow my instructions?

- How much does it hallucinate? …