On-device AI processes data directly on the device, enabling low latency, privacy, and offline use. If all the necessary information is available as context (e.g., a complete text that the model is to process), compact local models are often sufficient. Examples include open-weight models with around 4 billion parameters, such as Qwen3-VL-4B-Instruct. These can run in real time on modern hardware (NPUs, GPUs, or Apple silicon) and respond quickly without a cloud connection. Typical tasks with complete context include translation, style transfer (e.g., rewriting a given text), text classification, and local code completion. Hardware acceleration (NPU/GPU) also keeps power consumption low, and because sensitive data never leaves the device, privacy is preserved.

Translations (on-device): Small models can reliably translate short sentences or phrases, especially when the context is included in the prompt.

Adapt writing style: If entire text passages are supplied in the context, local models can change the style and tone of a text (e.g., neutral → promotional). This is essentially rewriting content that is already available locally.

Text classification: Domain-specific classifiers efficiently categorize documents or emails by topic or content. Small LMs are fast and accurate here, provided the text to be classified is supplied in the prompt.

Code completion: Local models such as Phi-3.5 Mini or Qwen3-4B can assist developers directly, offering suggestions while they type and completing code fragments. For short code snippets, locally running models with 4–8 billion parameters are often sufficient.

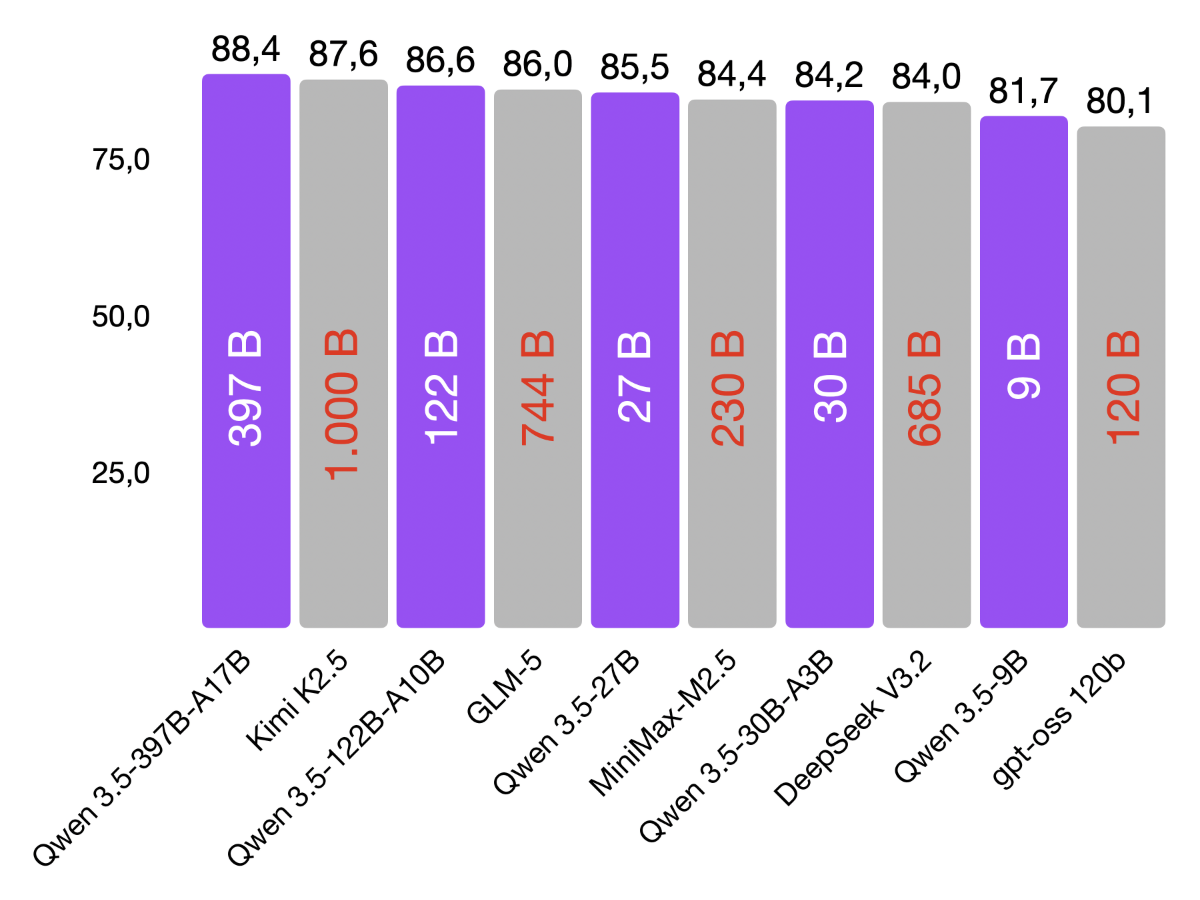

Large cloud LLMs for open-ended tasks

However, if the complete input data is not available or the task calls for more open-ended, creative thinking, it is worth turning to large cloud LLMs (e.g., GPT-4 from OpenAI, Google Gemini (formerly Bard), or Anthropic Claude). These models have been trained on text corpora of hundreds of billions of tokens or more and can draw on knowledge they have absorbed, without it having to be supplied in the prompt. They excel at open-ended questions and complex generation tasks that go beyond the provided context. For example, they are ideal for:

Complex code generation: When developing entire applications or complex programs (e.g., a complete web app or a game), large LLMs are more creative and more robust against errors. They grasp broader context better and can write extensive code from just a few keywords.

Longer texts & content creation: Generating long articles, reports, or creative texts is easier for larger models. They can write coherently across multiple paragraphs and also perform stylistically demanding writing tasks.

Answering questions (Q&A): LLMs are ideal for general knowledge questions or extensive research questions (when the input does not contain all the necessary facts). They draw on the world knowledge they have learned from their gigantic training corpus.

Dialogue/sparring: They handle open-ended conversations and take on the role of a “sparring partner” with ease. If you want to discuss ideas or debate arguments interactively with an AI, a cloud LLM usually makes for the liveliest conversation. Cloud LLM services also offer features such as long-term memory, multimodal input and output (image/audio), and regular model updates.

In short: small, specialized models are capable enough when all relevant data can be supplied in the prompt. Large models excel when open-ended thinking or prior world knowledge is required. Current comparisons suggest that small language models (SLMs) answer simple queries (translating short sentences, basic FAQs, simple classification) very quickly and with little compute, while LLMs handle complex tasks (complex Q&A, long texts, creative writing).

Personal assessment

My rule of thumb: Can I provide the AI model with all the information in advance? If so, I use a local 4B model (for example, Qwen3-VL-4B-Instruct). Processing remains private and fast. I typically use such models for translations, style changes, or classifications of existing data. If the context is missing or if it’s a more open creative task, I fall back on large cloud LLMs. For complex code generation, detailed writing, or interactive dialogue, their broader general knowledge and greater capacity simply serve me better. This hybrid approach combines the speed and data security of local models with the versatility of cloud AI.
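The rule of thumb boils down to two questions: is the context complete, and is the task bounded rather than open-ended? One way to encode it is a tiny routing function. This is only a minimal sketch under my own assumptions: the `Task` fields, the `choose_backend` function, and the backend labels are hypothetical, and the actual calls to a local or cloud model are left out.

```python
from dataclasses import dataclass
from enum import Enum


class Backend(Enum):
    # Hypothetical labels; wire these up to your own local runtime or cloud API.
    LOCAL = "local 4B model"
    CLOUD = "cloud LLM"


@dataclass
class Task:
    kind: str                 # free-form label, e.g. "translate" or "qa" (illustrative only)
    context_complete: bool    # can all needed information be placed in the prompt?
    open_ended: bool = False  # does the task need free, creative generation?


def choose_backend(task: Task) -> Backend:
    """Route a task using the rule of thumb: complete context and a bounded task -> local."""
    if task.context_complete and not task.open_ended:
        return Backend.LOCAL
    # Missing context or open-ended work goes to the larger cloud model.
    return Backend.CLOUD


if __name__ == "__main__":
    print(choose_backend(Task("translate", context_complete=True)).value)  # local 4B model
    print(choose_backend(Task("qa", context_complete=False)).value)        # cloud LLM
```

In practice the two flags would have to come from somewhere, for example from the calling application or from a user's explicit choice. The sketch only makes the decision logic explicit.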