Qwen has released the small series of the Qwen3.5 model range: Qwen3.5-0.8B | 2B | 4B | 9B

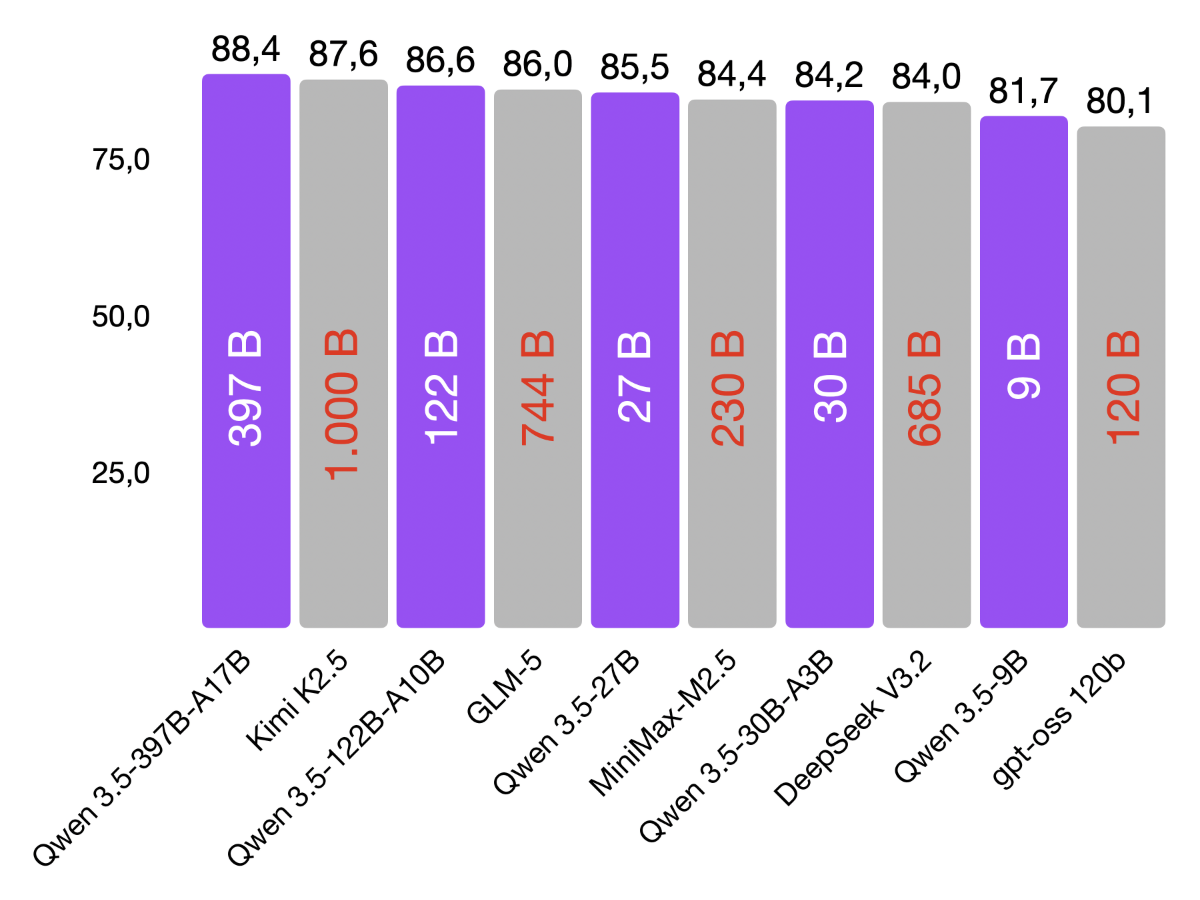

I am particularly impressed by the 4B and 9B models. The benchmark results are impressive (GPQA Diamond Benchmark): ✳️ Qwen3.5-4B beats OpenAI’s GPT-OSS-20B – even though it is 5x smaller ✳️ Qwen3.5-9B beats OpenAI’s GPT-OSS-120B – even though it is more than 13x smaller

This is exactly the kind of efficiency that makes on-device AI so exciting. I connected both models (with Thinking Mode enabled) to PicoClaw (OpenClaw alternative) and was surprised. The agentic capabilities work with both models.

The 4B is not yet sufficient for coding. The 9B delivers acceptable results, but for serious coding I would still opt for the Qwen3.5-35B-A3B or Qwen3.5-27B.

The predecessor, Qwen3-4B, has been running in many of my n8n workflows for a long time. Now I’m sending it into well-deserved retirement and handing over to its successor 👋

This week, I’ll continue testing the Small Series. Stay tuned!

./llama-server \

--model Qwen3.5-9B-UD-Q4_K_XL.gguf \

--ctx-size 131072 \

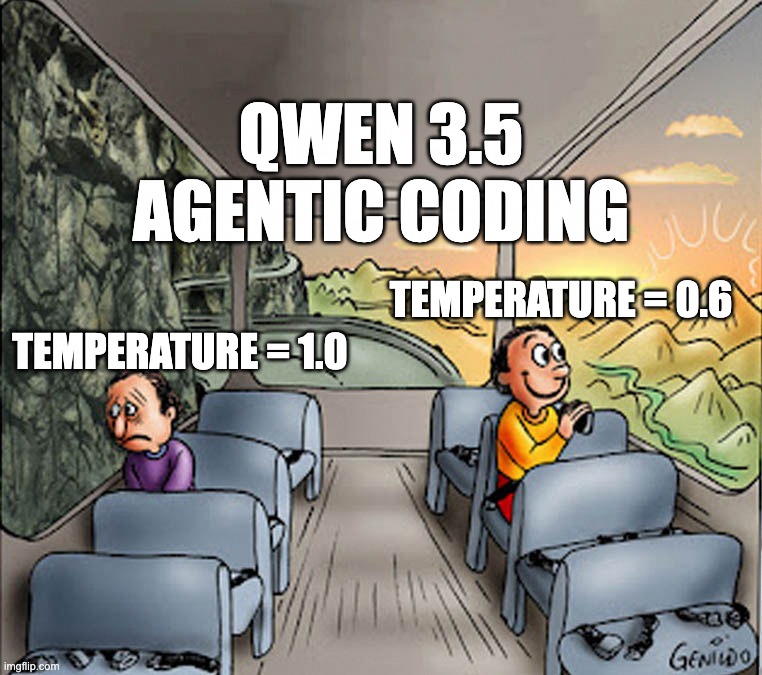

--temp 0.6 \

--top-p 0.95 \

--top-k 20 \

--min-p 0.00 \

--repeat-penalty 1.0 \

--alias "Qwen3.5" \

--port 8001 \

--chat-template-kwargs '{"enable_thinking":true}'