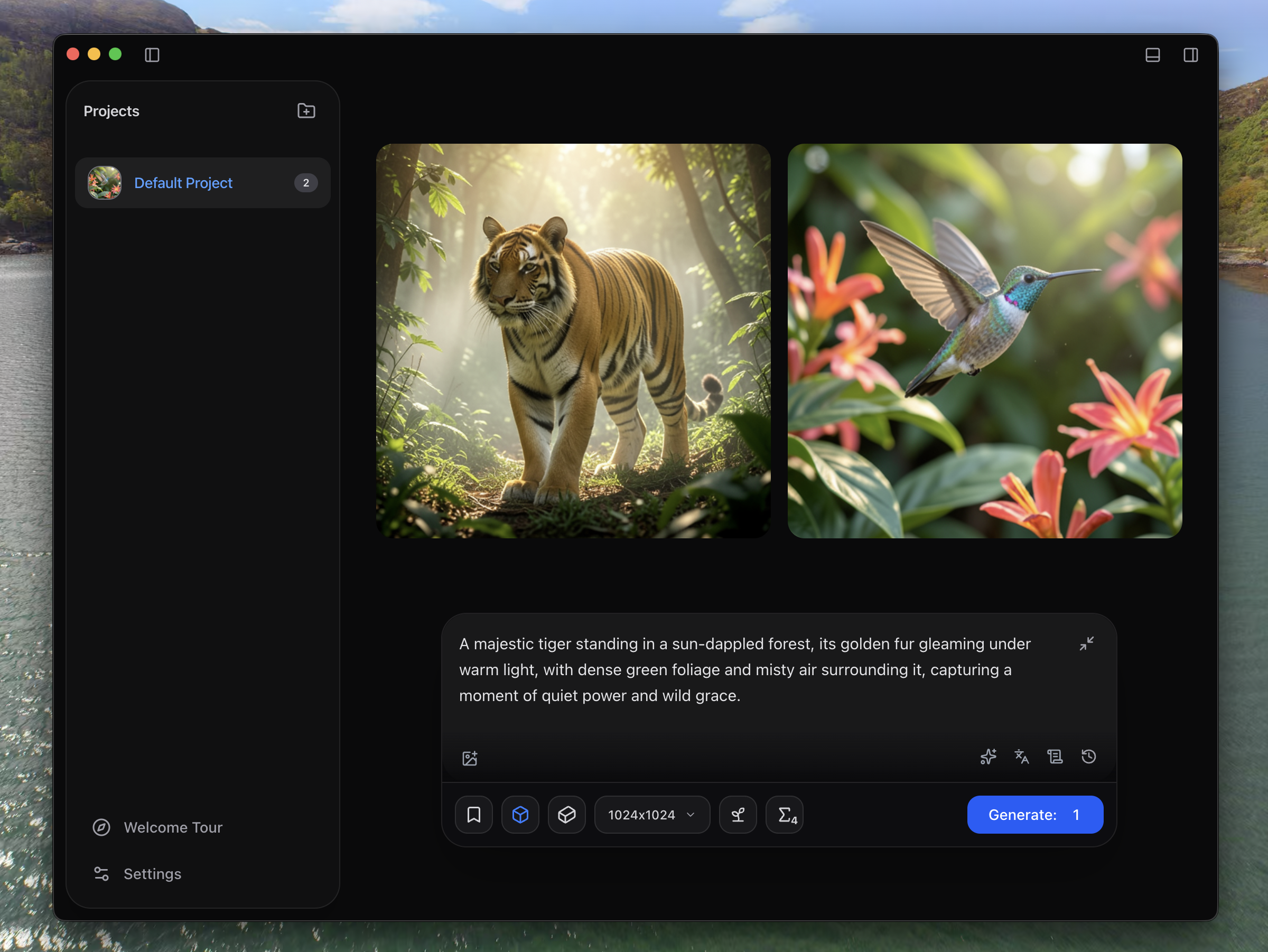

Forget everything I told you about Ollama or llama.cpp. On Mac, you have to use LM Studio for LLM inference. Using MLX simply doubles the speed while reducing memory consumption.

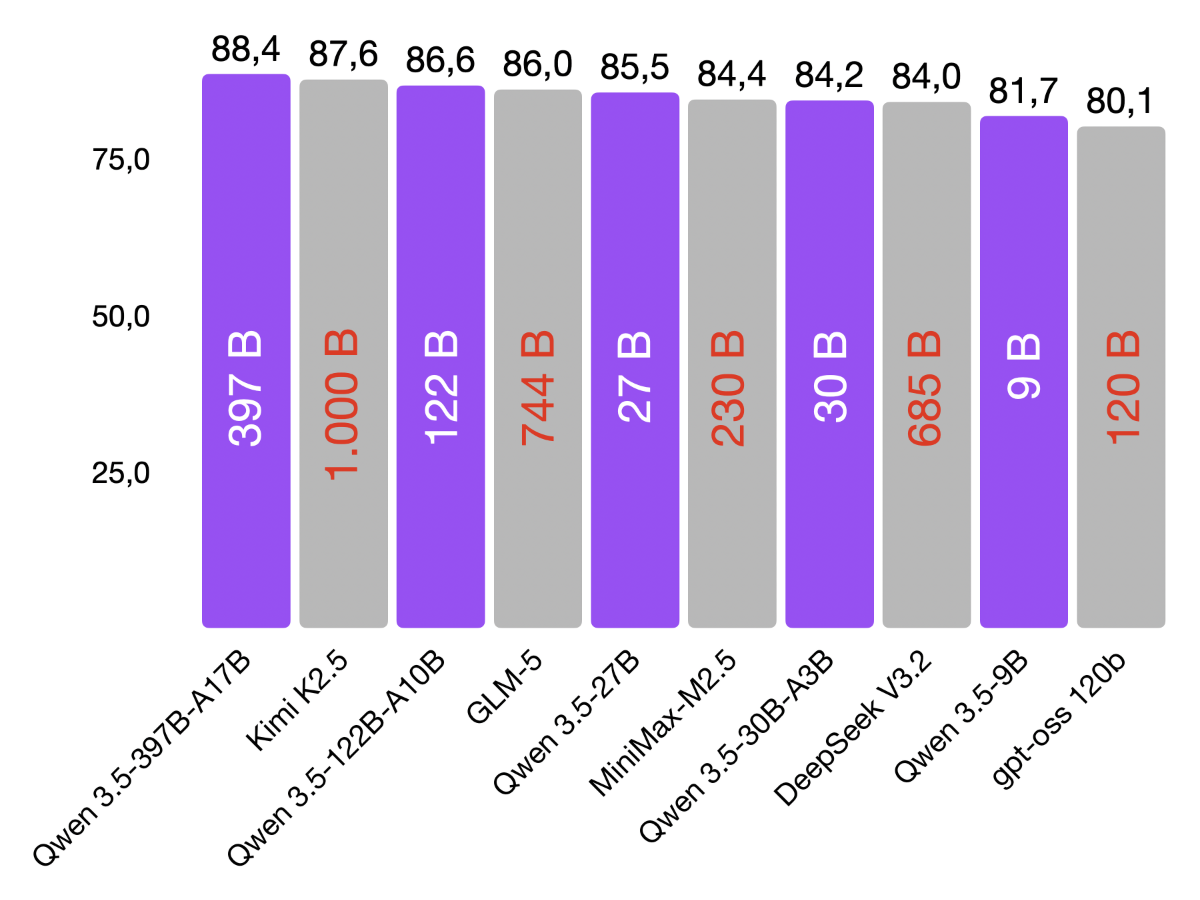

Here are some examples: With llama.cpp, I ran the Qwen3.5-35-a3b model (GGUF-4Bit) on my M3 Pro at about 25 tokens per second. With LM Studio, the Qwen3.5-35-a3b model (MLX-4Bit) ran at about 50 tokens per second on my M3 Pro.

In addition, the MLX model uses less memory and less energy during inference. Twice as fast and more economical. That’s amazing. Don’t make the same mistake I did and use LM Studio right away.